Overview

In a recent project, developed in cooperation with the Cornell University Digital Humanities Fellowship, I created a topic model of the eighteenth-century journal Das Magazin zur Erfahrungsseelenkunde (1783-1793). This project represents my first attempt at text analysis using MALLET and constitutes the first item in a growing portfolio of work in digital humanities. In what follows, I describe each of the key visualizations and outline the main steps taken in processing the data.

Historical Background

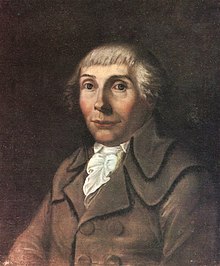

Karl Philipp Moritz (1756-1793) was an author, journalist, and philosopher who has in recent years gained increased relevance as a transitional figure between the Enlightenment and Romantic periods. In the early 1780s, Moritz became fascinated with the emerging intellectual study of the mind, known as ‘empirical psychology,’ and recognized the importance of carefully observing and documenting cases of psychic deviance from the norms of average life. Thus, over the following ten years, Moritz edited the journal GNOTHI SAUTON oder das Magazin zur Erfahrungsseelenkunde als ein Lesebuch für Gelehrte und Ungelehrte (GNOTHI SAUTON [Know Thyself] or the Magazine for Empirical Psychology as a Reading Book for the Educated and Uneducated). Instead of limiting the scope of this journal to the dominant intellectual figures of his time, Moritz opened the range of authorship to any authoritative figure who could narrate direct experiences of the abnormal, admonishing his readers to stick only to the facts themselves and to refrain from indulging in any moral nonsense. This proposal seems to have been accepted with great enthusiasm, and over the coming years, the journal published a diverse range of cases, from melancholia, criminality, and violence to speculations on the inner workings of the mind.

For a more complete discussion of Moritz, I recommend reading the digital edition of the journal, provided by Sheila Dickson and Christof Wingertszahn. In this project, my aim is to seek out trends in the wide, unorganized collection of text data that comprises the Magazin (hereafter MzE). In particular, I am interested in questions such as the following:

- What are the commonly recurring linguistic patterns in the corpus?

- Which authors demonstrate a narrow range of specialization over others?

- How did author interests develop over time?

Topic Interpretation

After pre-processing the text data into segmented files, I used MALLET to generate 25 topics within the corpus. These topics, shown above, consist of the top fifty words that occur in similar contexts throughout the journal. To better understand these results, I then needed to generate labels that might capture in a single term the focus of those words. Sometimes this was relatively easy, especially for topics that were confined to a narrow section of the work. However, in most cases, the topic represents a very general approximation of something that arises throughout the entire work and is not easy to pin down to a single idea. In this sense, the topics should not be understood as areas of discourse, but rather as rhetorical methods that might occur in some similar contexts. For example, topic 23 ‘heart’ might not seem so instructive, but it does reveal a recurring language about the ‘heart’ and gives clues that might point the close reader toward a direction of analysis. Perhaps the term ‘heart’ has some hidden significance, and this result might provide statistical grounds for an investigation of cases in which those terms occur.
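The labeling step can be illustrated with a minimal sketch. It assumes the topic keywords come from a MALLET topic-keys file in which each line holds a topic number, a weight, and the space-separated keywords, divided by tabs. The function name `parse_topic_keys`, the sample keywords, and the weights are invented for illustration and are not taken from the project's code.

```python
from typing import Dict, List


def parse_topic_keys(text: str) -> Dict[int, List[str]]:
    """Map each topic number to its list of keywords."""
    topics: Dict[int, List[str]] = {}
    for line in text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        # Assumed layout: topic number, weight, keywords (tab-separated).
        topic_id, _weight, words = line.split("\t", 2)
        topics[int(topic_id)] = words.split()
    return topics


# Made-up sample with two topics; the real file would contain 25 lines.
sample = "3\t0.2\tsprache wort zeichen\n23\t0.2\therz tod unglück\n"
topic_words = parse_topic_keys(sample)

# Labels are assigned by hand after reading the keywords.
labels = {3: "Philosophy of Language", 23: "heart"}
for topic_id, words in topic_words.items():
    print(topic_id, labels[topic_id], words)
```

Keeping the hand-written labels in a separate dictionary means the model can be re-run without losing the interpretive work, although the labels would need to be checked again because topic numbers are not stable between runs.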

Topic Probability

In this central visualization, the top 50 segments of each topic are plotted according to their probability. In other words, if a segment is plotted in topic 23 with a probability of 0.30, the model attributes roughly 30% of that segment's words to topic 23. Additionally, when the user hovers the mouse over a segment, one can see the topic label, keywords, and body text of that segment, as well as its location in the corpus. At first glance, we can see the following basic trends in the data:

- The model does not produce topics of equal distribution in the corpus. Some topics have a few segments that seem to play a leading role in defining the topic (e.g. 15), which helps to indicate certain texts that stand out in their uniqueness.

- Segments clustered near the higher end of the spectrum suggest a high degree of overlap with the keywords of the topic. In many cases, these may all be segments of a single longer article, all of which contain language that distinguishes the article from others.
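Selecting the segments shown in this chart can be sketched as follows. This is a minimal illustration, assuming the per-segment topic probabilities are already loaded into a dictionary; the segment names, the three-topic probabilities, and the function `top_segments` are hypothetical.

```python
from typing import Dict, List, Tuple


def top_segments(
    doc_topics: Dict[str, List[float]], topic: int, n: int = 50
) -> List[Tuple[str, float]]:
    """Return the n segments with the highest probability for one topic."""
    ranked = sorted(
        doc_topics.items(), key=lambda item: item[1][topic], reverse=True
    )
    return [(segment, probs[topic]) for segment, probs in ranked[:n]]


# Made-up probabilities for three segments and three topics.
doc_topics = {
    "seg_001": [0.80, 0.15, 0.05],
    "seg_002": [0.10, 0.30, 0.60],
    "seg_003": [0.25, 0.70, 0.05],
}
print(top_segments(doc_topics, topic=1, n=2))
```

A topic whose top segments fall off sharply after the first few entries corresponds to the first trend noted above, where a small number of segments define the topic.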

Full Database

The third page contains an index of each segment according to volume, book, and article. The bars corresponding to each segment comprise the probabilities of each topic occurring in that segment. With this information, one can see how a given article changes in topic from beginning to end. For example, the article “Sprache in Psychologischer Rücksicht” in vol. 1, book 1, contains segments with ~80% probability of topic 3 (Philosophy of Language). When the user hovers over one of these segments, the pop-up shows the top three topics of that segment and the sample text. Unlike in the previous visualizations, each word of the sample text is tagged with the number of the topic with which it is most closely associated.

While this visualization may simply function as an index of the corpus, it also makes it possible to see transitions within a single article, indicating key turning points. For example, see “Etwas aus Robert G…s Lebensgeschichte” in vol. 1, book 3. Approximately halfway through the article, the dominant topic shifts from ‘heart’ (comprising the various expressions of emotion) toward ‘duration’ (expressions indicating a turn of events, such as ‘day,’ ‘long,’ ‘night,’ etc.). In this regard, I find this chart much more instructive about the corpus, and it may prove more useful for a researcher aiming to select articles on the basis of certain topical criteria.
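A transition of this kind could also be detected programmatically. The sketch below assumes that each article is available as an ordered list of per-segment topic probabilities and takes the dominant topic to be the one with the highest probability; the function names and sample numbers are invented for illustration.

```python
from typing import List, Tuple


def dominant_topic(probs: List[float]) -> int:
    """Index of the topic with the highest probability in a segment."""
    return max(range(len(probs)), key=probs.__getitem__)


def topic_shifts(segments: List[List[float]]) -> List[Tuple[int, int, int]]:
    """List (segment index, old topic, new topic) for each change of dominant topic."""
    shifts = []
    previous = None
    for index, probs in enumerate(segments):
        current = dominant_topic(probs)
        if previous is not None and current != previous:
            shifts.append((index, previous, current))
        previous = current
    return shifts


# Made-up article of four segments over two topics.
article = [[0.7, 0.3], [0.6, 0.4], [0.2, 0.8], [0.1, 0.9]]
print(topic_shifts(article))
```

In this sample the dominant topic changes once, at the third segment, which is the pattern described above for an article whose focus turns roughly halfway through.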

Volume-Topic Attribution

Following the logic of the previous chart, this image illustrates the breakdown of topic probabilities by volume. Close examination of this chart lends credibility to the intuition that the journal increases in theoretical reflection near the end of its life. This can be seen in the increasing share of probability given to topic 12, which contains words related to logical inference (e.g. ‘explain,’ ‘object,’ ‘form’), and the decreasing share attributed to speculative topics, such as topic 2, affects of the soul (‘imagination,’ ‘image,’ ‘impression’), or topic 23, heart (‘heart,’ ‘death,’ ‘unhappiness’).

In some respects, this image gets closer to the proposal that I originally intended for this project, which would have tracked the occurrence of key terms over a ten-year period. While this chart does not single out any particular term, it does make it possible to generate some hypotheses about the text, which might be useful for further investigation.
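The per-volume breakdown described in this section can be approximated by averaging the topic probabilities of all segments in each volume. This is a minimal sketch under that assumption; the project's own aggregation may differ, and the function `topic_share_by_volume` and its sample rows are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def topic_share_by_volume(
    rows: Iterable[Tuple[int, List[float]]]
) -> Dict[int, List[float]]:
    """Average the topic probabilities of all segments in each volume."""
    sums: Dict[int, List[float]] = {}
    counts: Dict[int, int] = defaultdict(int)
    for volume, probs in rows:
        if volume not in sums:
            sums[volume] = [0.0] * len(probs)
        for i, p in enumerate(probs):
            sums[volume][i] += p
        counts[volume] += 1
    return {v: [s / counts[v] for s in totals] for v, totals in sums.items()}


# Made-up rows: (volume number, topic probabilities of one segment).
rows = [(1, [0.75, 0.25]), (1, [0.5, 0.5]), (10, [0.25, 0.75])]
shares = topic_share_by_volume(rows)
print(shares)
```

Averaging rather than summing keeps volumes with more segments from appearing to carry more of every topic.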

Author-Topic Attribution

In a similar vein to the previous image, this chart shows the breakdown of topic probabilities for each author of the Magazin. (Note that there are still some repeated entries that should be accounted for.) The most common writers are Karl Philipp Moritz, Carl Friedrich Pockels, Solomon Maimon, and Marcus Herz. In the future, I would like to continue developing this image to provide improved functionality. Here, it might be helpful to implement functions that allow comparisons relative to the number of articles that each author wrote. That way, it would be easier to infer which authors made the greatest contributions to the development of a topic.
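One possible form of the comparison proposed here is to divide each author's summed topic weight by the number of articles that author wrote. The sketch below is not part of the existing project; the function `per_article_share` and all numbers are invented to show the idea.

```python
from typing import Dict, List


def per_article_share(
    topic_totals: Dict[str, List[float]], article_counts: Dict[str, int]
) -> Dict[str, List[float]]:
    """Divide each author's summed topic weights by their article count."""
    return {
        author: [total / article_counts[author] for total in totals]
        for author, totals in topic_totals.items()
        if article_counts.get(author, 0) > 0  # skip authors with no count
    }


# Made-up totals over two topics and made-up article counts.
topic_totals = {"Moritz": [6.0, 2.0], "Maimon": [1.5, 0.5]}
article_counts = {"Moritz": 8, "Maimon": 2}
print(per_article_share(topic_totals, article_counts))
```

In this invented case the two authors look very different in raw totals but identical per article, which is the kind of distortion the normalization is meant to remove.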

Topic Author Count

In this final visualization, each topic is measured according to the number of authors associated with it. Some topics appear among a small number of authors (8: the Absolute), whereas others exist among a much wider range of writers (11: Military service). Note that these counts are occasionally the inverse of the measurements in the Topic Probability chart. As shown in topics 2 and 8 (Affects of the Soul and the Absolute), some topics contain articles with a high probability of connection and a correspondingly small number of authors. Conversely, topics 10 (mental sickness) and 11 (military service), which both involve the largest number of authors, have a lower range of article probabilities. This seems to suggest that some topics function as “catch-alls,” i.e. they gather all those articles that are not easily captured by another, narrower topic, and are therefore defined by the most general language. This contrasts with those articles that stand out in their uniqueness and are produced by only a minority of contributors.
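The author count per topic can be sketched as follows. The source does not state how an author is considered to be associated with a topic, so this example assumes that an author counts toward a topic whenever it is the dominant topic of at least one of their segments. The function `authors_per_topic` and the sample records are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


def authors_per_topic(
    records: Iterable[Tuple[str, List[float]]]
) -> Dict[int, int]:
    """Count distinct authors whose segments have each topic as dominant."""
    authors: Dict[int, Set[str]] = defaultdict(set)
    for author, probs in records:
        # Assumption: association means the topic has the highest probability.
        top = max(range(len(probs)), key=probs.__getitem__)
        authors[top].add(author)
    return {topic: len(names) for topic, names in authors.items()}


# Made-up records: (author, topic probabilities of one segment).
records = [
    ("Moritz", [0.6, 0.4]),
    ("Pockels", [0.7, 0.3]),
    ("Moritz", [0.2, 0.8]),
]
print(authors_per_topic(records))
```

A different rule, such as a probability threshold, would give different counts, so the contrast between narrow topics and catch-all topics should be read with that choice in mind.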

Conclusions and Future Directions

The production and labeling of topics can often be an intriguing process, revealing curious points of interpretation and unique possibilities of further research. However, as with many computational approaches to literature, these methods should be taken with due skepticism and an avoidance of quick generalization. Ultimately, the ‘topics’ I have proposed here are highly dependent upon the initial conditions by which these outputs were generated. If any of these parameters were slightly different, the list of topics could be entirely different, and might suggest an entirely different outcome for the project.

Despite these limitations, I believe this project was successful to the extent that it identified key turning points in the corpus that might otherwise not be easily found. As demonstrated in the images of the Full Database and Topic Probability, it is possible to identify longer segments that transition between topics, as well as texts that offer a more distinct focus than others. Moreover, I am encouraged by the success of measuring authors by their topic compositions. Going forward, it seems possible that one could account for the authorship of a large number of anonymous reports in the Magazin, some of which could be attributed to one of the several major known contributors.

For a more complete understanding of the processes behind this project, I encourage you to visit my GitHub page, where you will find all the relevant code involved in developing these results. Generally speaking, the project consists of two parts: Scraping and Processing. First, in scraping the data, I used the Beautiful Soup Python module to collect the text data from the site linked above. This text is then tokenized and stemmed before finally being segmented into text files of roughly equal length. Then, in the processing section, you will find the code responsible for cleaning the text and tagging each word according to its German part of speech. From here, the data is processed through MALLET, which generates the list of topic keywords and other important tables, such as the relative weight of each word in the model.
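The segmentation step can be illustrated with a short sketch. It assumes the text has already been tokenized into a list of words and uses a hypothetical segment size of 300 tokens; the actual size and the function `segment_tokens` are not taken from the project's code.

```python
from typing import List


def segment_tokens(tokens: List[str], size: int = 300) -> List[List[str]]:
    """Split tokens into chunks of about `size` tokens each."""
    chunks = [tokens[i:i + size] for i in range(0, len(tokens), size)]
    # Fold a short final chunk into the previous one so that no segment
    # ends up much shorter than the rest.
    if len(chunks) > 1 and len(chunks[-1]) < size / 2:
        tail = chunks.pop()
        chunks[-1] = chunks[-1] + tail
    return chunks


# Made-up example: seven tokens with a segment size of three.
tokens = ["a", "b", "c", "d", "e", "f", "g"]
print([len(chunk) for chunk in segment_tokens(tokens, size=3)])
```

Segments of comparable length matter for the model because topic probabilities from very short segments rest on only a handful of words.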

Finally, I would be happy to answer any questions that you may have about this work. Also, I welcome any constructive feedback that any experienced contributors may have in advancing this project. Thank you.